THE CURATED LOG V

By MITO Universe - @mitofilms

Welcome to MITO Universe.

Cameras? Optional now. As AI tools dissolve technical barriers, we’re left with the raw core of creation: intention.

This week’s picks show how working within limits sparks real innovation. The tools evolve, but the goal remains constant: developing projects that resonate.

Here’s what’s pushing creative boundaries right now.

SELECTED CREATORS

AI.S.A.M / @samfinn.studio

AI.S.A.M, the London-based artist, operates at the intersection of constructed narratives and documentary authenticity.

He crafts still and moving images that evoke the language of social documentary, editorial fashion, and even Baroque painting—yet none are real. Drawing from the visual codes of street photography, analog film, and archival aesthetics, his AI-generated work poses a quiet challenge: What do we accept as real when the emotion feels authentic?

Beyond personal exploration, AI.S.A.M has brought his signature vision to campaigns for brands like Prada, SKIMS, and Dilara Findikoglu, proving that synthetic image-making carries both commercial impact and conceptual depth.

YZA VOKU / @yzavoku

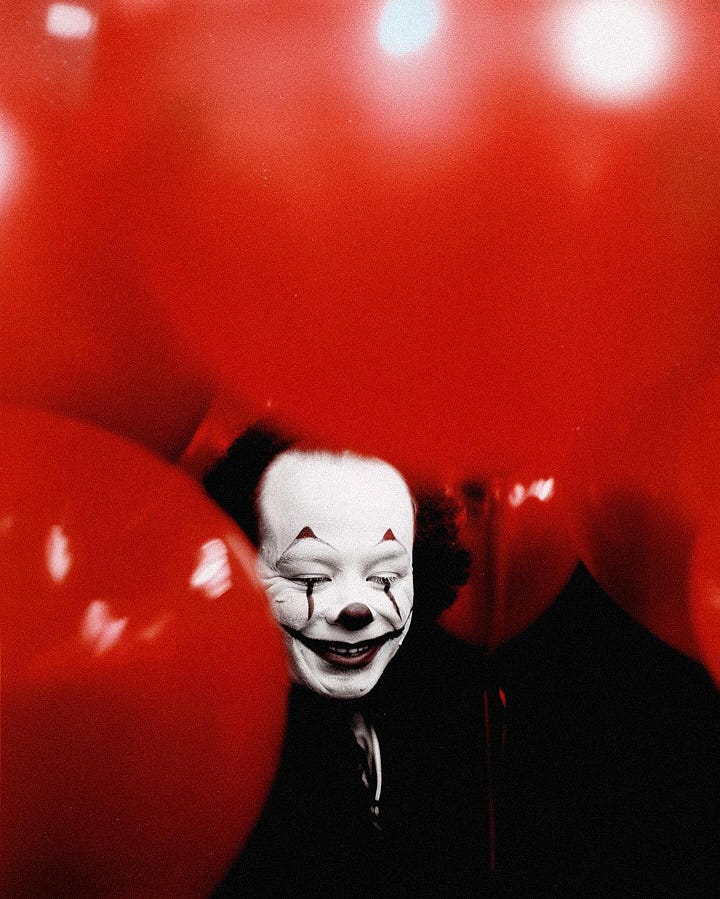

Madrid-based visual artist and director YZA Voku crafts a controlled visual language where tension takes precedence over realism.

With a background in photography, filmmaking, and art direction, he explores new modes of expression through generative AI. His work is defined by a rigorous chromatic identity, clear influences from surrealist cinema and film photography, and a refined editorial aesthetic, all anchored by a distinctive personal iconography.

From Sutura, his experimental short film, to branded work like The Weeknd’s São Paulo Streaming Teaser, Voku’s imagery thrives on sharp contrasts, symbolic precision, and a heightened sense of atmosphere — revealing how visual intensity and stylized constraint can become a narrative force in themselves.

WHAT’S NEW

HeyGen’s New AI Studio Puts Storytelling on Script-Level Autopilot

HeyGen has introduced AI Studio, a new editing environment that allows users to direct AI-generated videos with precise control over tone, expression, gestures, and B-roll, all without a camera or traditional editing skills. Built for “human-first” storytelling, the platform prioritizes avatars that are expressive, engaging, and true to the user’s own voice and motion. Users can map gestures to script cues, preview avatar movements, and even translate videos into 170+ languages using their digital twin.

More than a production tool, HeyGen AI Studio points toward a near future where creating high-quality, multi-language, avatar-led content becomes frictionless — offering a scalable solution for anyone who wants to appear on camera, without ever stepping in front of one.

Smarter, Faster, Lighter: Flux.1 Upgrade

In collaboration with NVIDIA, Black Forest Labs has fine-tuned its open-source image-to-image model for real-time, step-based editing with powerful new performance enhancements.

By combining generative and editing capabilities into one intuitive flow, Flux.1 now lets users make incremental, high-precision changes using natural language or visual references — no complicated interfaces, masks, or layers required.

The latest update introduces low-precision quantization via TensorRT, dramatically improving inference speed (up to 2x faster) and reducing VRAM consumption. This means creators can now work more fluidly with high-resolution images and multi-step edits, even on limited hardware.
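To make the VRAM point concrete, here is a rough back-of-the-envelope sketch of how numeric precision affects the memory needed for a model's weights. The 12-billion parameter count and the list of precisions are assumptions chosen for illustration, not figures from the announcement, and the estimate covers weights only (activations and other buffers are ignored).

```python
# Rough estimate of weight memory at different numeric precisions.
# ASSUMPTIONS: the parameter count and the precision list are illustrative,
# not taken from the announcement; only the weights are counted.

BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "fp4": 0.5}


def weight_memory_gib(num_params: int, precision: str) -> float:
    """Return the approximate size of the weights in GiB."""
    return num_params * BYTES_PER_PARAM[precision] / (1024 ** 3)


if __name__ == "__main__":
    params = 12_000_000_000  # hypothetical 12B-parameter model
    for precision in BYTES_PER_PARAM:
        print(f"{precision}: {weight_memory_gib(params, precision):.1f} GiB")
```

Halving the bytes per parameter halves the weight footprint, which is why lower-precision variants are more likely to fit on consumer GPUs.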

These improvements don’t just streamline the editing process — they change how we think about iterative, high-fidelity image generation. Especially useful for concept artists, illustrators, and narrative designers, the model also supports style transfer, character consistency, and localized edits in real time.

With open weights available on Hugging Face and plug-and-play integration into ComfyUI, Flux.1 is shaping up to be a flagship tool for anyone looking to bring precision and creativity into a single, seamless AI workflow.

Google’s Gemma 3n Brings Multimodal AI to Mobile

Google’s latest open-source model, Gemma 3n, brings powerful multimodal capabilities — including vision, audio, video, and text — to devices with as little as 2GB of RAM.

Unlike its proprietary sibling Gemini, Gemma is designed for researchers and developers seeking accessible, fine-tunable tools. Built on a new architecture called MatFormer, Gemma 3n adapts its size depending on the task — like a nesting doll — optimizing memory use without sacrificing performance. Its efficient audio and vision encoders allow for on-device voice recognition, video processing at 60 FPS, and multilingual support across 140+ languages, with full local execution.
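The nesting-doll idea can be illustrated with a toy feed-forward layer in which a smaller sub-model reuses only the first few hidden units of the full layer. This is a simplified sketch of the general nested-layer concept, not Gemma 3n's actual implementation; the function name, weights, and widths are all made up for illustration.

```python
# Toy illustration of a "nested" feed-forward layer.
# ASSUMPTION: nested_ffn, W_IN, W_OUT, and the widths are hypothetical;
# this is not Gemma 3n code.


def nested_ffn(x, w_in, w_out, width):
    """Apply a ReLU feed-forward layer using only the first `width` hidden units.

    x:     input vector (d_model floats)
    w_in:  one row of d_model weights per hidden unit
    w_out: one row of hidden-unit weights per output dimension
    """
    hidden = [
        max(0.0, sum(w * xi for w, xi in zip(row, x)))
        for row in w_in[:width]
    ]
    return [sum(w * h for w, h in zip(row[:width], hidden)) for row in w_out]


# Full layer: 4 hidden units over a 2-dimensional input.
W_IN = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, -1.0]]
W_OUT = [[1.0, 0.0, 0.5, 0.0], [0.0, 1.0, 0.5, 1.0]]

x = [2.0, 1.0]
small = nested_ffn(x, W_IN, W_OUT, width=2)  # smaller "inner doll"
full = nested_ffn(x, W_IN, W_OUT, width=4)   # full-size layer
```

Both calls share the same weight matrices; the smaller configuration simply reads a prefix of them, so no second set of weights has to be stored.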

Already available on Hugging Face, Kaggle, and Google AI Studio, Gemma 3n signals a shift toward more private, flexible, and efficient AI workflows, empowering developers to build intelligent tools that run on-device at the edge rather than in the cloud.

KEY VISUAL

Glitching the Unreal by Italian AI artist katsukokoiso.ai is an existentialist and hypnotic journey exploring fictional paths and the concept of dichotomy.

These pieces manipulate and distort visual elements to challenge our perceptions of authenticity. Each frame oscillates between coherence and disintegration, mirroring the fundamental duality at the heart of our digital age—the simultaneous longing for and distrust of technological perfection.

Every unstable pixel and warped frame cracks open new questions about what we can trust on screen.

That’s all for now — we’ll be back in your inbox next Monday.