THE CURATED LOG XX

By MITO Universe - @mito.universe

Welcome back to MITO Universe.

Three new signals, one direction of travel. Runway powers an Amazon Prime hit by fusing AI with choreography and weather: proof that imagination scales when pipelines are hybrid. Universal Music Group and Stability AI codify licensed, artist-first models: the creative economy finally begins to pay its sources. Figma folds Weavy into Figma Weave, bringing node-based media to the canvas, while Perplexity absorbs Visual Electric and Krea raises $83M for real-time creation.

Together they outline the same sensibility we champion: editorial restraint, architectural light, photoreal tactility with a dream-side current.

Less spectacle, more control. Systems that let nuance breathe.

SELECTED CREATORS

Rotem Goeta-Mitz / @rotemitz

We read Rotem Goeta’s work through structure first: garments as volumes, jewelry as micro-architecture, bodies placed against disciplined lines and natural light. The palette stays intentional: skin, stone, metal, wood, with an occasional chromatic punctum. Her images sit in déjà rêvé: photoreal surfaces veiled by a slight oneiric haze, where memory drives the frame. Modernist rigor, like Mies’s or Le Corbusier’s, meets editorial sensitivity, and the blur becomes dramaturgy.

Goeta’s studio, Future Positive, operates as a couture-grade pipeline for AI and 3D. Physical products are embedded inside synthetic scenes so the fiction sells truth: fabrics read tactile, metals hold weight, light behaves like architecture. She composes in sequences: shot-to-shot consistency, gaze continuity, and spatial rhythm. The result: images that feel discovered, not generated.

Editorially, she trades noise for clarity: clean geometry, generous negative space, gestures held just past breath. Themes recur: thresholds and windows, drape versus edge, self-possession rather than performance. Museum and fashion-week contexts confirm reach, but the signature is quieter: a calibrated intimacy that lets brands speak in a new register, one that is future-literate, emotionally precise, technically exact.

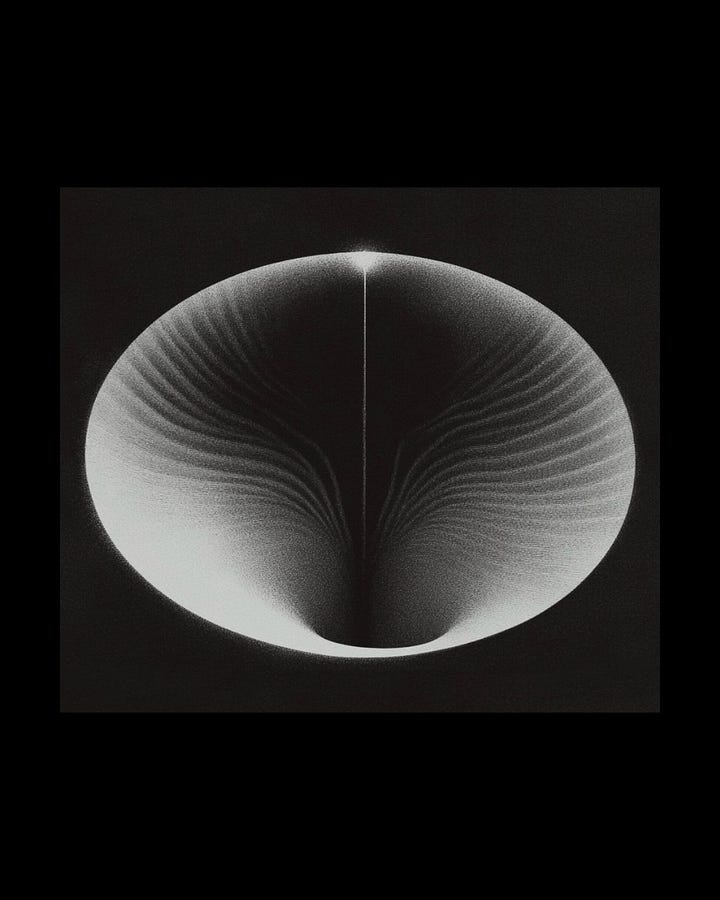

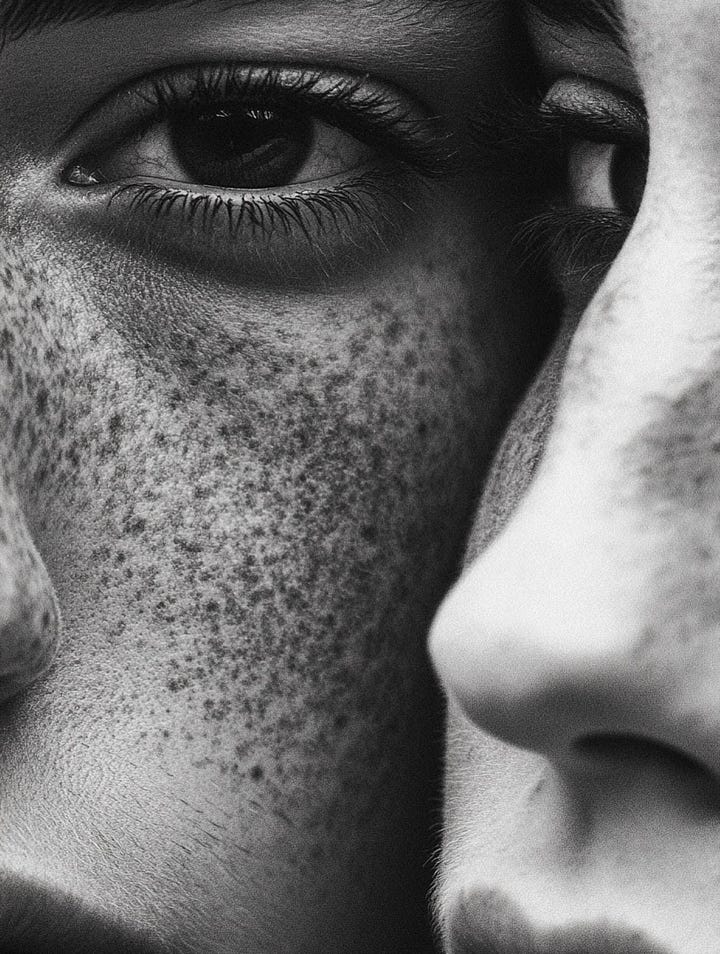

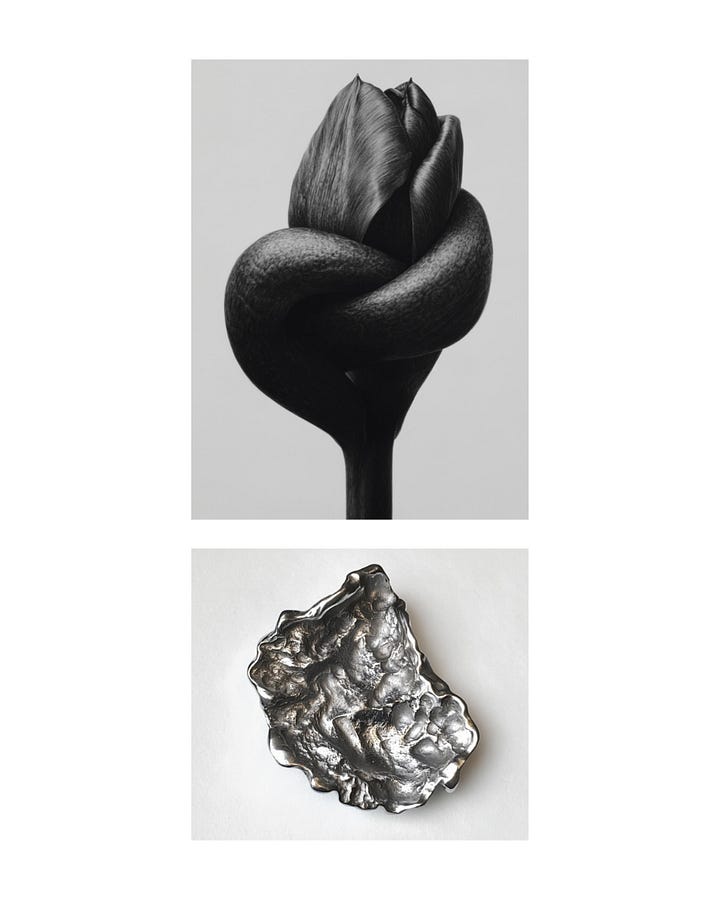

CONSEQUA / @__consequa

For Consequa, the Paris-based AI image studio, monochrome goes first. Zero noise. Skin, metal, petal: three materials, one dramaturgy. Close crops carry heat: freckles as constellations, lips at the edge of a cigarette, an iris held like a secret. Then the pivot: botanicals and jewelry staged with surgical restraint. A tulip pierced by a ring, a tomato wearing diamonds, x-ray blossoms hovering in milk light. Organic matter becomes pedestal; luxury feels inevitable.

Composition is bookish. Diptychs and generous negative space create a page rhythm; images speak in pairs: human/flower, wood/hair, curve/edge. Light is architectural, almost didactic: gloss where metal needs to breathe, velvet on skin, a soft flare to suggest time passing. Grain is chosen, not added. The black background isn’t mood; it’s a control surface.

Technically, we sense a hybrid lab: diffusion for ideation and morphologies, high-fidelity retouch and CGI for surface truth, a printmaker’s eye for tonal scale. Consistency across sets is the signature: materials repeat, gestures reduce, meaning accrues.

The stance is editorial yet commercial: beauty and fine jewelry read with museum calm, fashion with 90s precision. No baroque AI fireworks. Just calibrated intimacy, modern still lifes, and portraits that behave like objects. A language for quiet luxury, future-literate and exact.

WHAT’S NEW

Runway to the Throne: AI Crowned “House of David” on Amazon Prime

Showrunner Jon Erwin and Vū’s Tim Moore reveal how generative tools, especially Runway, powered Episode Six’s most complex sequences: photoreal characters, rain, smoke, wind, even feathered wings, with consistency across shots. The hybrid AI + traditional pipeline cut roughly five months of post, delivering 432 minutes across eight episodes and helping the series reach 22 million viewers in 17 days and rise to #1 on Amazon Prime after the finale. For Erwin, the payoff is creative velocity: previs to final in minutes, armies of thousands on demand, and a workflow tethered directly to imagination.

Universal Music Group x Stability AI: Setting an Artist-First, Licensed Playbook for Generative Music

Universal Music Group and Stability AI have struck a landmark pact (Oct 30, 2025) to co-develop fully licensed, commercially safe AI music tools. Built around artist participation and feedback loops, the models will train only on authorized data with compensation for contributors, expanding the framework UMG established in its recent Udio deal. Stability brings Stable Audio and diffusion expertise. Beyond tooling, the alliance aims to set ethical standards for discovery, production, and royalties, shaping how creative rights are protected as AI spreads across entertainment.

Figma buys Weavy to launch “Figma Weave,” bringing node-based AI media into the design canvas

Figma has acquired Weavy (20-person team, $4M seed, 2024) and will run it as a stand-alone product before folding it into the Figma Weave brand. Weavy’s infinite-canvas, node-based workflow chains multiple models (Sora, Veo, Seedance; Flux, Ideogram, Nano Banana, Seedream) with pro layer edits and lighting and angle controls, letting designers branch, remix and refine from prompt to polished asset. The move signals accelerating consolidation in AI design tools (e.g., Perplexity/Visual Electric; Krea’s $83M raise) and pushes Figma deeper into AI-native media generation.

KEY VISUAL

Masaki Mizuno / @mizuno.m.masaki

A city that bends to a body. The tunnel ceiling ripples like tin under heat; hazard chevrons soften into surf. Lamps yaw, stairs shimmer, bicycles duplicate as if memory lags a few frames behind. Pastel beiges, industrial yellow, powder-blue sky, then a fine RGB fringe at the edges, a reminder: noise is the subject. Not grain but hallucination, harnessed.

The dance is the metronome. Each gesture pushes pressure waves through steel and stucco, turning infrastructure into choreography. Surfaces read wet, elastic, a little feverish. It feels late afternoon; air heavy; time pliable.

Under the hood, live action is relit and “made to dream”: Cosmos-Transfer diffusion for albedo and HDRI shadows, blended back through a classic VFX pipeline. Prompts are clay, not scripture; craft defines the contour of the hallucination.

The result: an incredibly lucid glitch. Human intention shaping latent space until the street answers back. Architecture becomes instrument, and movement the score.

That’s all for now — we’ll be back in your inbox next week.