THE CURATION LOG II

By MITO Universe - @mitofilms

Welcome back to MITO Universe!

We get it — we’re also mesmerized by Higgsfield’s turning metal edits all over TikTok. But beyond short-lived virality, a growing wave of creators is engaging with generative tools in more intentional ways.

We’re here for them — artists using AI as a method to construct, reimagine, and rethink the built image.

This second issue highlights creators whose work expands spatial language and visual logic.

Let’s get into it.

SELECTED CREATORS

Juan Carlos Beltrán / @jc_______b

Mexican photographer and visual artist Juan Carlos Beltrán approaches generative image-making with the refined prompting of someone who knows the canon — and knows how to challenge it.

While he experiments with different AI engines (Midjourney, Grok, DALL·E, Gemini), his output reflects a consistent visual vocabulary and a clear point of view. He uses these tools to explore and challenge common perceptions, inviting the viewer to question the authenticity and visual constructs that shape our worldview. His work reflects a constant pursuit of the boundary between what is real and what is artificial.

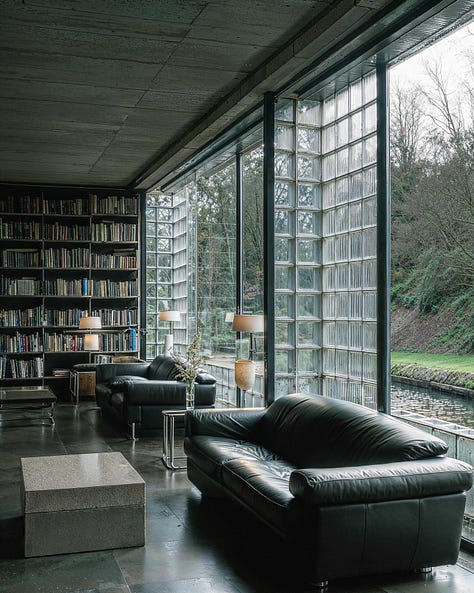

His series INTANGIBLE SPACES explores speculative architectures and interiors that sit somewhere between AI-generated composition, archival fantasy, and constructed memory. These generative spaces are tightly composed, rich in texture and balance, and often indistinguishable from a vintage spread in The World of Interiors.

Beltrán’s work often revolves around organic brutalism, Mexican modernism, and sci-fi-inspired scenarios. Heavy concrete volumes melt into cliffs and dense subtropical vegetation; glass blocks diffuse sunlight across wood-paneled surfaces, and greenery floods domestic areas as if it were part of their very structure. The atmosphere throughout remains calm, exquisite, and quietly inhabited.

What Beltrán offers is a body of work that reframes what architectural storytelling through AI can be.

Matthieu Grambert / @matthieugb

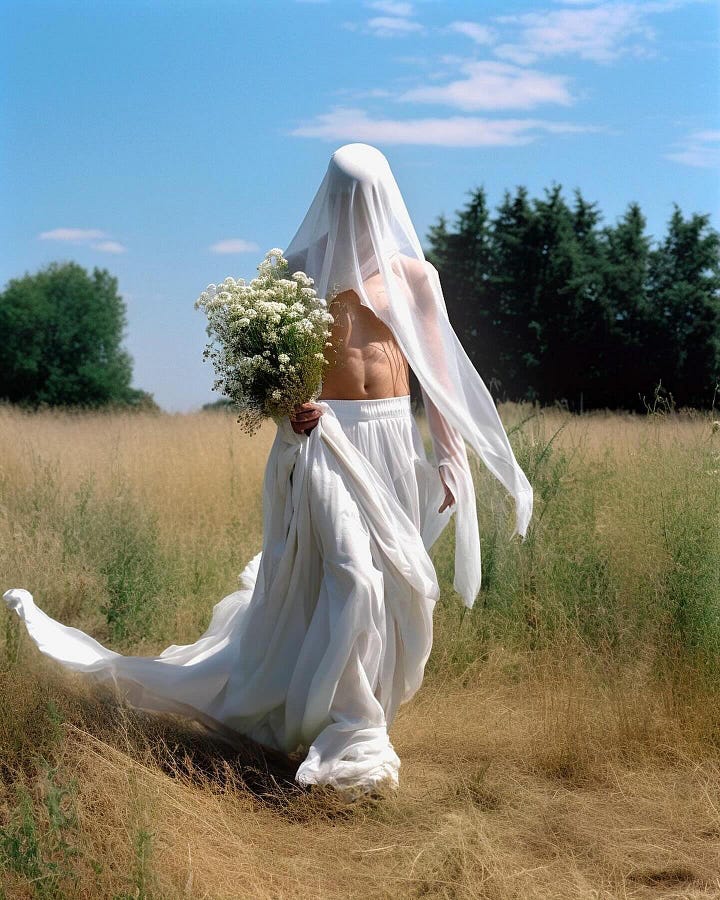

French digital artist Matthieu Grambert applies a strong narrative instinct to his AI-generated work, spanning both video and still image. With a background in the film industry, his practice combines cinematic composition, surreal digital aesthetics, and editorial influence to produce visuals that often resemble isolated frames from an imagined storyline — suggestive, composed, and deliberately open-ended.

His broader generative portfolio reveals a clear editorial direction, marked by sleek compositions, constructed environments, and fashion-forward iconography. This visual language has also translated into commissioned work for clients such as Dior, Etnia Barcelona, Jason Wu, and even Snoop Dogg.

Grambert works with tools such as Midjourney, DALL·E, and Stable Diffusion, building images entirely from scratch — without external prompts or photographic references. While some of his pieces achieve a striking degree of realism and detail, others drift towards memory, fantasy, and digital speculation.

His series The Pics Not Taken, described as “a random latent space exploration of realism, surrealism, and abstraction,” imagines travel photographs from cities never visited — scenes suspended between myth and memory.

Grambert’s work has also been featured in international AI film festivals. In short films like To Wonderland and Gone, he applies full-spectrum AI integration across the entire production pipeline — from ideation and scripting to storyboarding, animation, sound design, and original music.

Already in 2023, in an interview with Pornceptual, Grambert pointed to what has become a defining dynamic in AI-driven creative work: the rapid evolution of tools and workflows that expand what a single creator can achieve. “Every time I gave up on something I couldn’t make, two weeks later I could — thanks to the unprecedented speed of technological progress. […] We will see creators from nowhere go big. The bar for content is going to get very high. One person can now become a photo-video studio. We’ll discover new talents, new arts, mixed old and new — it’s going to be interesting to follow how things develop.”

WHAT’S NEW

Google speeds up AI video creation with Veo 3 Fast

Google just launched Veo 3 Fast, a new iteration of its AI video generator that produces 720p clips more than twice as fast as the previous version. Available to both Gemini Pro and Flow Pro users, the update lowers costs and waiting times significantly, allowing creators to iterate faster. This is part of Google’s broader push to scale video infrastructure and embed generative tools directly into its core products. Beyond speed, Veo 3 Fast sets the stage for upcoming features like voice prompts and better subtitle rendering. While capped at 720p for now, the promise lies in prototyping ideas quickly, making the tool ideal for experimentation and pre-visualization in creative workflows.

Runway introduces Chat Mode

Runway has launched Chat Mode, a conversational interface that allows users to generate Gen-4 images, videos, and reference materials in a single, streamlined space. This update marks a shift towards more fluid and intuitive creative workflows, giving users end-to-end control without leaving the chat. The feature is now live and available to all users.

KEY VISUAL

13 Ways to Annoy People on the Commute by Ikenna Mokwe. An AI-native short packed with irony and accuracy.

Created by filmmaker and AI Video Lead Ikenna Mokwe (Runway, Higgsfield, Hailuo, Freepik CPP) and produced with a full-stack AI pipeline — Kling, Runway, Veo 2, Luma, Higgsfield, Krea.ai, ElevenLabs, among others — this 2-minute film stages a fictional campaign for the e-bike brand Rollin’ Solo. Through a fast-paced, ironic guide to petty public transport habits, it reframes commuting as a space to reclaim — or abandon altogether — blurring the line between mock commercial and micro-fiction.

That’s a wrap for now — still digging for gems. Stay tuned.